In this post, we explore five clear signs that your manufacturing operation has outgrown manual data management and needs a process data historian. These warning signals, from teams spending hours recreating historical context to compliance audits creating constant stress, reveal when the absence of systematic process data management has become a serious operational liability. Recognizing these signs early means you can address them proactively rather than waiting for a crisis to force your hand.

Fast, scalable data historian at a fraction of the cost. Check out the dataPARC Historian.

Recognizing the Tipping Point

Many manufacturing operations run for years without a process data historian, relying instead on SCADA trend buffers, manual data logging, and institutional knowledge. This approach works fine when operations are simple, staff is stable, and nobody asks difficult questions about what happened last month. But as production scales, processes become more complex, and competitive pressures intensify, the cracks in manual data management begin to show.

The challenge is recognizing when you’ve crossed the line from “we’re managing okay” to “we’re losing money and capability every day we don’t have a historian.” The transition happens gradually. Engineers spend a bit more time hunting for data. Quality investigations take longer. Compliance audits become more stressful. Before you realize it, the absence of systematic process data management has become a serious operational liability.

If you’re experiencing any of these five signs, your manufacturing operation has likely reached the tipping point where a process data historian stops being a nice-to-have and becomes essential infrastructure. Recognizing these signals early means you can address them proactively rather than waiting for a crisis to force your hand.

Sign 1: Your Team Spends Hours Recreating Historical Context

The most obvious sign you need a process data historian is when answering simple questions becomes a time-consuming archaeological expedition. An engineer asks, “What was our reactor temperature profile during that batch last Tuesday?” What should take 30 seconds instead consumes half a day.

The engineer logs into the DCS and discovers the trend buffer only keeps 72 hours of data. Tuesday’s information is gone. Next, they check if anyone exported the data manually. Maybe someone did, maybe they didn’t. Files get searched. Colleagues get interrupted. Eventually, someone finds a spreadsheet with partial data, but it’s missing key variables needed for complete analysis. The question that should have taken seconds to answer requires hours of effort and still produces incomplete results.

This pattern repeats constantly. Troubleshooting equipment issues requires reconstructing operating conditions from multiple disconnected sources. Validating process improvements means manually exporting data from several systems, aligning timestamps, and hoping nothing important was missed. Comparing this month’s performance to last quarter involves building custom spreadsheets because historical data isn’t systematically accessible.
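
To make the timestamp-alignment chore concrete, here is a minimal Python sketch of what that manual step amounts to. It assumes two hypothetical exports, a DCS temperature trend and a set of lab pH readings, each already loaded as a sorted list of (timestamp, value) pairs. The function name, tag data, and 10-second tolerance are illustrative assumptions, not part of any particular product.

```python
from bisect import bisect_left
from datetime import datetime, timedelta


def align_nearest(left, right, tolerance):
    """Attach to each (timestamp, value) in `left` the nearest-in-time value from `right`.

    Both inputs must be sorted by timestamp. When no sample in `right` falls
    within `tolerance`, None is attached so the gap stays visible.
    """
    right_times = [t for t, _ in right]
    aligned = []
    for t, value in left:
        i = bisect_left(right_times, t)
        # Only the samples just before and just after t can be the nearest.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(right)]
        best = min(candidates, key=lambda j: abs(right_times[j] - t), default=None)
        if best is not None and abs(right_times[best] - t) <= tolerance:
            aligned.append((t, value, right[best][1]))
        else:
            aligned.append((t, value, None))
    return aligned


# Hypothetical data: one-minute DCS samples and irregular lab readings.
base = datetime(2024, 1, 2, 8, 0, 0)
dcs_temperature = [(base + timedelta(seconds=60 * k), 180.0 + k) for k in range(4)]
lab_ph = [
    (base + timedelta(seconds=5), 6.9),
    (base + timedelta(seconds=118), 7.1),
    (base + timedelta(seconds=600), 7.4),
]

rows = align_nearest(dcs_temperature, lab_ph, tolerance=timedelta(seconds=10))
for timestamp, temperature, ph in rows:
    print(timestamp.isoformat(), temperature, ph)
```

Two of the four temperature samples end up with no matching lab value, which is exactly the "partial data" problem described above. The sketch only makes the gaps explicit; it cannot recover data that was never stored.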

Your engineering talent gets wasted on data archaeology instead of analysis. The time spent gathering information exceeds the time spent understanding it. Questions go unanswered not because the data never existed, but because retrieving it is too difficult. Every hour your team spends hunting for historical context is an hour not spent optimizing processes, solving problems, or driving improvements.

When data gathering becomes the bottleneck to decision-making, you’ve outgrown manual approaches. A process data historian eliminates this waste by making historical context instantly accessible.

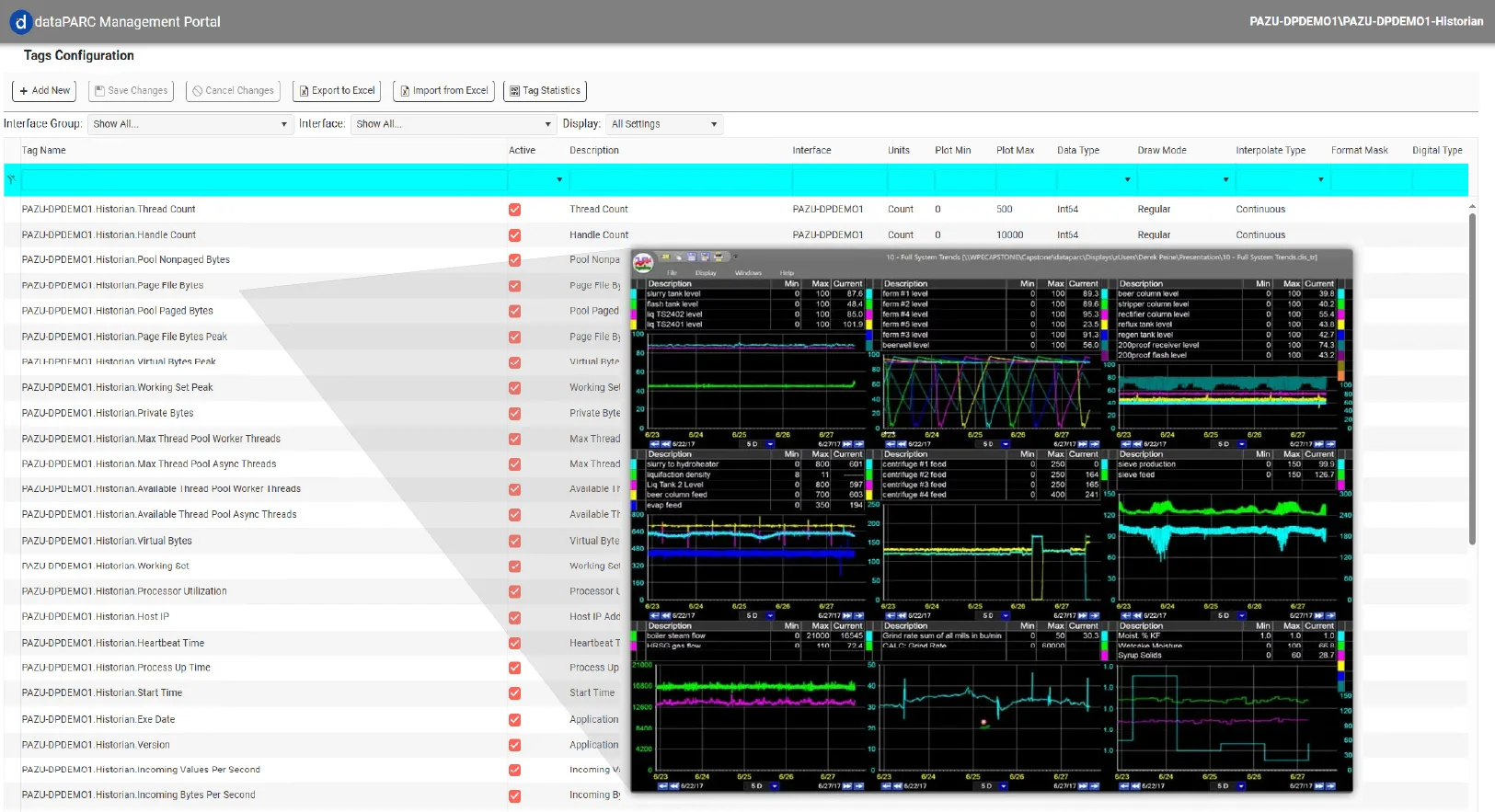

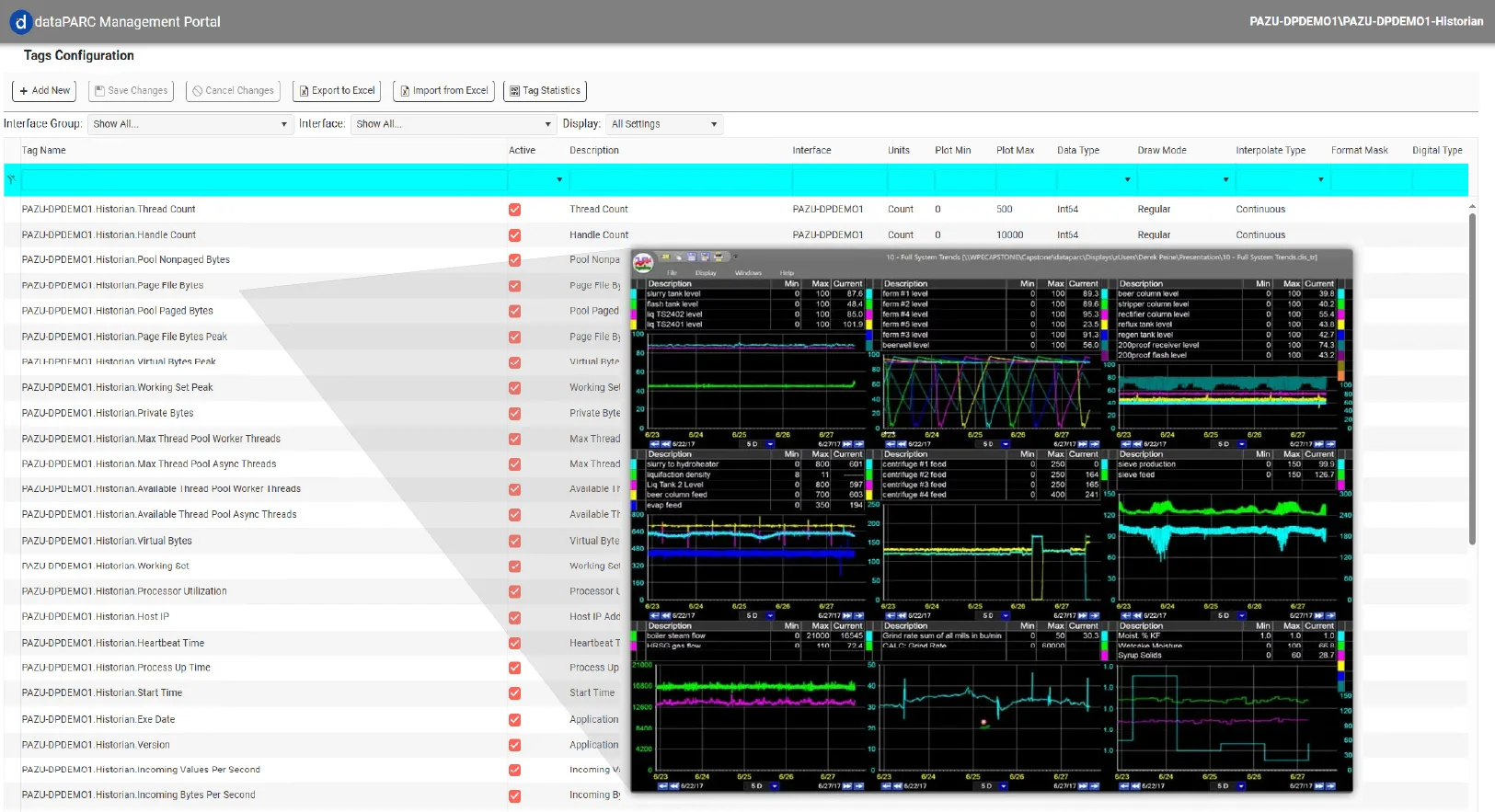

Having a data historian and visualization platform allows users to find and navigate historical data in a matter of seconds.

Sign 2: You’re Making Decisions Without Complete Information

Critical process data has a shelf life in systems without historians. SCADA buffers overwrite after days or weeks. PLC memory is limited. Manual logging captures snapshots but misses continuous trends. The data exists briefly, then disappears before anyone analyzes it thoroughly. Retaining that data is truly stage one of digital transformation for process industries.

This creates a dangerous pattern where decisions get made based on incomplete information. An operator notices efficiency declining but can’t compare current performance to last month because that data no longer exists. A quality engineer investigates a defect but lacks visibility into process conditions from when the problem actually occurred. Management debates whether a recent process change improved yield, but historical baselines are unavailable for comparison.

Teams adapt by making decisions based on recent memory rather than long-term patterns. “I think we usually run hotter than this” becomes the basis for adjustments instead of “historical data shows our optimal range is X.” Gut feel replaces data-driven certainty because the data simply isn’t available when needed. Experience and intuition are valuable, but they shouldn’t substitute for facts when those facts should be readily accessible.

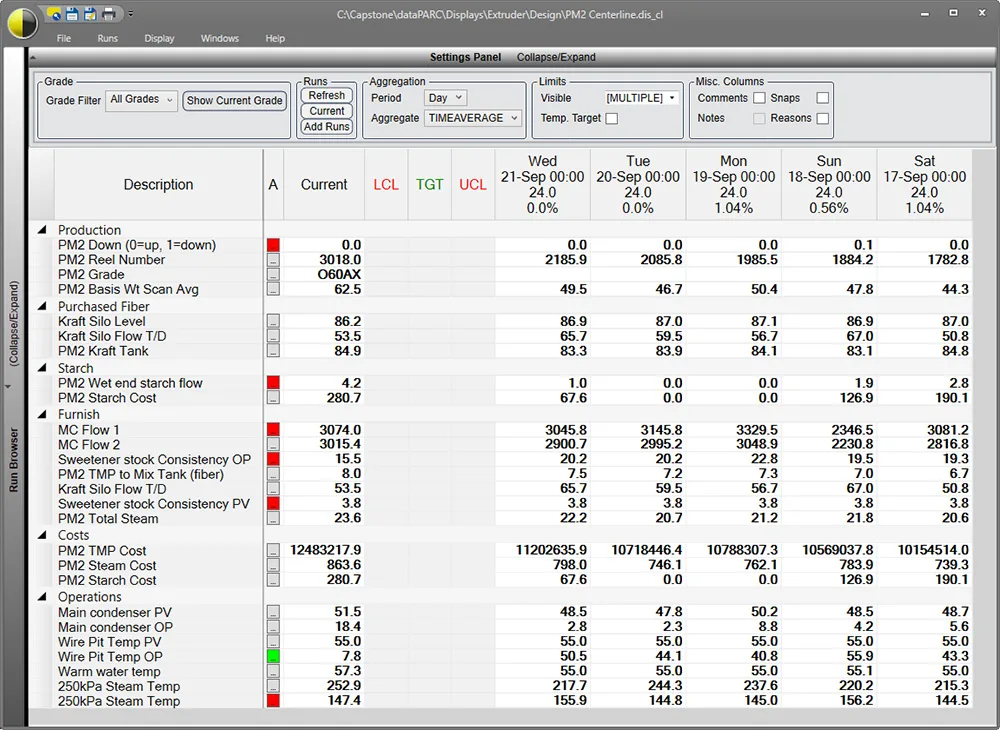

Centerline, an optimization display in dataPARC, compares past production runs with the current run, so there is no guessing about how the process ran last time.

Quality investigations suffer particularly. Root cause analysis requires understanding what was different when problems occurred compared to normal operation. Without comprehensive historical data, investigations rely on theories and assumptions rather than evidence. The same issues recur because their true causes were never definitively identified.

The cost of incomplete information compounds over time. Suboptimal processes continue because improvement opportunities aren’t visible. Mistakes get repeated because the lessons from past incidents weren’t captured. Your operation never reaches its potential because decisions lack the data foundation they deserve.

A process data historian ensures complete information is always available when decisions need to be made, transforming gut-feel operations into data-driven excellence.

Sign 3: Regulatory Compliance Creates Constant Stress

For regulated industries like pharmaceuticals, food and beverage, or chemical manufacturing, compliance isn’t optional. Regulators and auditors demand proof that processes remained within specifications, that quality standards were maintained, and that any deviations were detected and addressed appropriately. Providing this proof without a process data historian turns every audit into a crisis.

The auditor requests temperature records for a specific production batch from three months ago. Without a historian, someone starts searching. Were temperature values logged manually? Where are those paper logs or spreadsheets? Are they complete? Do they cover the entire batch duration? Can you prove these records weren’t modified after the fact? The scramble begins, and the stress levels rise as teams try assembling documentation from fragmented sources.

Proving compliance shouldn’t take days of effort assembling documentation. When it does, valuable production time gets lost. Staff gets pulled from their regular duties to support audit preparation. The focus shifts from running excellent processes to desperately proving you ran them acceptably in the past. This reactive, stressful approach to compliance is unsustainable.

A process data historian transforms compliance from a source of stress into a straightforward administrative task. Automated data collection ensures completeness. Timestamps and audit trails prove authenticity. Reports are generated in minutes instead of days, and confidence replaces anxiety.

Sign 4: Process Knowledge Walks Out the Door When People Leave

Your most experienced operators know exactly how to handle unusual situations. They recognize subtle patterns that indicate developing problems. They understand which process adjustments work best under different conditions. This knowledge took years to accumulate through direct experience. And it exists almost entirely in their heads.

When these veterans retire or move on, that institutional knowledge disappears with them. New employees start from scratch, lacking access to the patterns and insights that experienced operators developed over decades. Training becomes difficult because the teaching relies on stories and anecdotes rather than concrete historical examples that demonstrate what good operation looks like versus problematic operation.

Without systematic process data capture, there’s no way to codify and preserve operational expertise. You can’t show new engineers how the plant responded to similar situations in the past. You can’t validate that a proposed process change aligns with or contradicts historical performance patterns. The organizational learning that should accumulate over time instead resets with each personnel change.

Manufacturing excellence requires continuous learning, and learning requires memory. A process data historian serves as organizational memory, capturing and preserving the patterns and insights that would otherwise exist only in people’s heads. New employees can study historical data to accelerate their learning. Process improvements can be validated against years of operational history. Knowledge accumulates instead of evaporating.

When losing a key person creates a knowledge crisis, you need better systems for capturing and preserving process intelligence. That system is a process data historian.

Manual downtime logs are incomplete, inconsistent, and impossible to analyze at scale. dataPARC tracks every event: process conditions when they occurred, duration, reason codes, and comments. Finally, downtime data you can actually use.

Sign 5: You Can’t Prove Your Process Improvements Actually Work

Your team implements a process change aimed at improving energy efficiency. Anecdotally, it seems to be working. Operators report things are running smoother. Utility bills might be lower, though it’s hard to say for certain because seasonal factors and production mix changes make comparisons difficult. Management asks for proof that the improvement delivered value. You can’t provide it convincingly.

Without comprehensive historical data, before-and-after comparisons rely on incomplete information and anecdotal evidence. You remember roughly where energy consumption used to be, but you can’t produce precise baselines that account for production rate variations, ambient conditions, and product mix. The claimed improvement might be real, or it might be a coincidence and wishful thinking. Nobody knows for certain.

This uncertainty kills continuous improvement momentum. Why invest time and effort in optimization projects if you can’t prove they work? Why pursue efficiency initiatives if the benefits remain unquantifiable? Teams become reluctant to change anything because success can’t be measured and celebrated, while failures might not even be recognized until significant damage occurs.

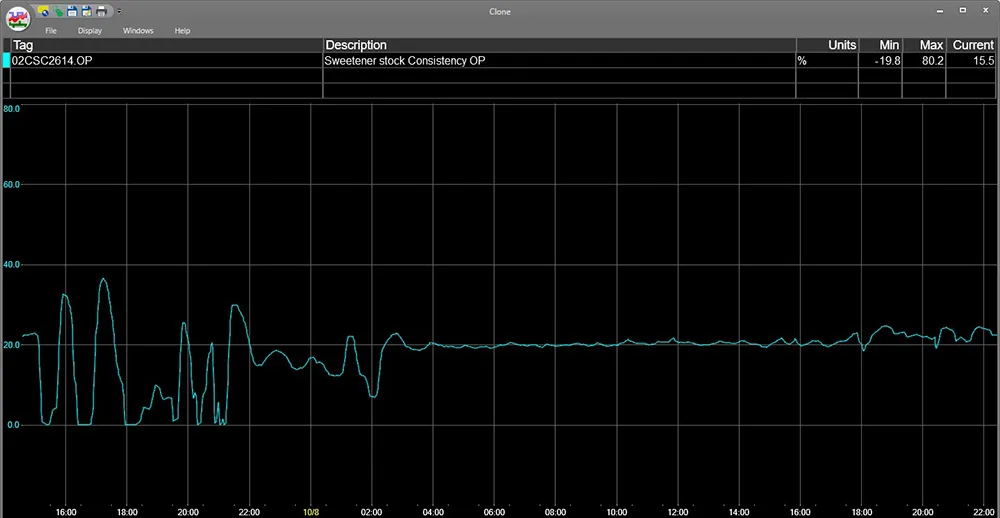

In this trend, we can see that the process was noisy, but after an adjustment, it leveled out.

Capital investment justifications become difficult. You want to upgrade equipment based on expected performance improvements, but ROI calculations require assumptions rather than data-backed projections. Finance departments rightly question investments when the business case relies on “we think this will help” rather than “historical data shows this opportunity exists and here’s the quantified benefit.”

A process data historian transforms improvement initiatives from faith-based exercises into data-driven projects with measurable outcomes. You can establish precise baselines, measure changes accurately, and prove definitively whether improvements delivered expected benefits. Success gets quantified and celebrated. Continuous improvement becomes truly continuous because you can measure what’s working.
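
As a rough illustration of what a production-normalized baseline can look like, the Python sketch below compares specific energy use (kWh per tonne) before and after a change instead of comparing raw energy totals. The sample values, function names, and hourly granularity are assumptions made for the example. It corrects only for production rate, not for ambient conditions or product mix.

```python
from statistics import mean


def specific_energy(samples):
    """Mean energy per unit of production (kWh per tonne).

    `samples` is a list of (energy_kwh, production_tonnes) pairs. Periods
    with zero production are skipped to avoid dividing by zero.
    """
    ratios = [kwh / tonnes for kwh, tonnes in samples if tonnes > 0]
    return mean(ratios)


def percent_change(before, after):
    """Percent change in specific energy from the baseline period to the new one."""
    baseline = specific_energy(before)
    return 100.0 * (specific_energy(after) - baseline) / baseline


# Hypothetical hourly samples: (energy in kWh, production in tonnes).
before = [(500.0, 10.0), (600.0, 12.0), (450.0, 9.0)]
after = [(540.0, 12.0), (450.0, 10.0), (630.0, 14.0)]

total_before = sum(kwh for kwh, _ in before)
total_after = sum(kwh for kwh, _ in after)
change = percent_change(before, after)

print(f"Raw energy: {total_before:.0f} kWh before, {total_after:.0f} kWh after")
print(f"Specific energy change: {change:.1f}%")
```

In this made-up data, total energy rises from 1550 kWh to 1620 kWh because production went up, while energy per tonne falls by 10%. A raw comparison would hide that improvement. With real historian data, the same calculation would run over weeks or months of samples rather than three hours.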

What a Process Data Historian Solves

A data historian addresses all five of these pain points by providing automatic, continuous, long-term storage of process data with tools that make that data easily accessible and actionable.

Automatic data collection

Automatic data collection eliminates manual logging and the gaps that come with it. Every sensor, every process variable, every equipment status indicator gets captured continuously at rates matching your process dynamics.

Long-term storage

Long-term storage means historical context is always available. Whether you need data from yesterday, last month, or three years ago, it’s instantly accessible. Troubleshooting investigations start with complete information. Compliance audits are supported with comprehensive records. Process comparisons span months or years instead of being limited to recent memory.

Built-in analysis tools

Built-in analysis tools turn data into insights without requiring custom programming or spreadsheet gymnastics. Trending interfaces let engineers visualize process behavior over any timeframe. Statistical calculations reveal patterns and correlations.

Conclusion: The Cost of Waiting

These five signs don’t improve on their own. Data accessibility problems worsen as operations grow more complex. Compliance risks increase as regulatory scrutiny intensifies. Knowledge loss accelerates as experienced staff approach retirement. The longer you wait to implement a process data historian, the more capability and competitive advantage you sacrifice.

Early adoption prevents problems rather than solving crises. Organizations that implement historians proactively build data-driven cultures from positions of strength. Those who wait until pain becomes unbearable implement historians reactively under pressure, often after expensive mistakes have already occurred. The difference in outcomes is substantial.

The good news is that modern process data historians like dataPARC are designed for straightforward implementation. Integration with existing systems is comprehensive and proven. Configuration is intuitive. Users find the interfaces accessible without extensive training. Value starts flowing within days or weeks, not months or years.

If you recognize your operation in one or more of these signs, you’ve reached the point where a process data historian stops being a future consideration and becomes an immediate priority. The capabilities you’re missing and the inefficiencies you’re tolerating have real costs, measured in wasted time, missed opportunities, and unnecessary risk.

The question isn’t whether you’ll eventually need a process data historian. The question is whether you’ll implement one proactively to capture value and prevent problems, or reactively after those problems have already cost you dearly.

FAQ: Process Data Historians

- What is a process data historian?

A process data historian is specialized software that continuously collects, compresses, and stores time-series process data from sensors and control systems in manufacturing operations. dataPARC is a process data historian that integrates with visualization and analysis tools, letting users access and analyze historical data through the same intuitive interface they use for real-time monitoring.

- How is a historian different from a SCADA system or database?

SCADA systems focus on real-time monitoring and control with limited data retention (typically hours or days), while general databases aren’t optimized for high-frequency time-series data and struggle with data compression and query performance. Process historians like dataPARC are purpose-built for long-term storage of continuous process data, using specialized compression algorithms and indexing that make retrieving years of high-resolution data fast and efficient. For more, check out Historians vs. SCADA for Manufacturing Analytics.

- Does dataPARC support store-and-forward capabilities?

Yes. dataPARC uses store-and-forward technology to ensure data integrity and prevent loss during network or server disruption.

- Can a new historian backfill my old historian data?

Although we can’t speak for every historian, the dataPARC historian can backfill old historian data, so your site keeps its data even when switching systems.
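
For readers unfamiliar with the store-and-forward idea mentioned in the FAQ, here is a generic Python sketch of the concept. It is not dataPARC's implementation. The class, the `send` callback, the tag name, and the buffer size are all hypothetical. The collector queues samples locally, forwards them oldest-first, and keeps whatever it cannot deliver until the link returns.

```python
from collections import deque


class StoreAndForwardBuffer:
    """Hold samples locally while the link is down, then forward them in order."""

    def __init__(self, send, max_pending=10_000):
        # `send` is a callable taking one sample and returning True on success.
        self._send = send
        # Bounded queue: if it fills up, the oldest pending sample is dropped.
        self._pending = deque(maxlen=max_pending)

    def submit(self, sample):
        """Queue a new sample and try to forward everything that is pending."""
        self._pending.append(sample)
        return self.flush()

    def flush(self):
        """Forward oldest-first; stop at the first failure and keep the rest."""
        while self._pending:
            if not self._send(self._pending[0]):
                return False
            self._pending.popleft()
        return True

    @property
    def pending(self):
        return len(self._pending)


# Simulated link to a historian server that can be switched off and on.
link = {"up": True}
received = []


def send_to_historian(sample):
    if link["up"]:
        received.append(sample)
    return link["up"]


buffer = StoreAndForwardBuffer(send_to_historian)
buffer.submit(("TI-101", 1, 180.2))

link["up"] = False                      # network outage begins
buffer.submit(("TI-101", 2, 180.6))
buffer.submit(("TI-101", 3, 181.0))
pending_during_outage = buffer.pending  # samples held locally

link["up"] = True                       # link restored
buffer.submit(("TI-101", 4, 181.3))

print(pending_during_outage, buffer.pending, len(received))
```

All four samples reach the simulated server in their original order, even though two of them were collected during the outage. A production collector would typically persist the queue to disk so that a restart of the collector itself does not lose the buffered samples.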
